WebMCP Could Change How Agents Use Your Website

8 min read

Summary

While SEOs have long debated whether we should be optimizing for search engines or humans (shockingly, it's both), that paradigm is shifting. The practical question is what this changes for SEO, content quality, and AI search visibility.

There is a specific kind of anxiety that comes with working in the digital space: the fear of wasting time on a shiny object. Search history is full of features that looked important early, pulled teams into implementation work, and then disappeared before they became durable operating advantages.

The more sustainable rhythm is to wait for wider, but still early, adoption: watch the first movers, learn from their mistakes, and move quickly once the signal is clearer. The difficult part is recognizing the rare moments that are not just trends, but structural changes in how discovery works.

Every so often, a shift occurs that is not just a trend, but a fundamental change in the landscape. The people who read the original PageRank paper and immediately realized the value of building links are a great example. They didn't just follow a tactic; they understood a shift in how information was valued. WebMCP feels like one of those moments. It isn't just another update to search or a new way to get "AI visibility." It is a change in the very nature of discoverability and who, or what, is doing the discovering.

Coming soon: Non-human engagement

For years, the central debate in SEO has been whether we should optimize for search engines or for human beings. The answer, of course, has always been both. But that paradigm is shifting. We are moving toward a world where the primary entity discovering your content isn't a person, but an LLM or an agentic system.

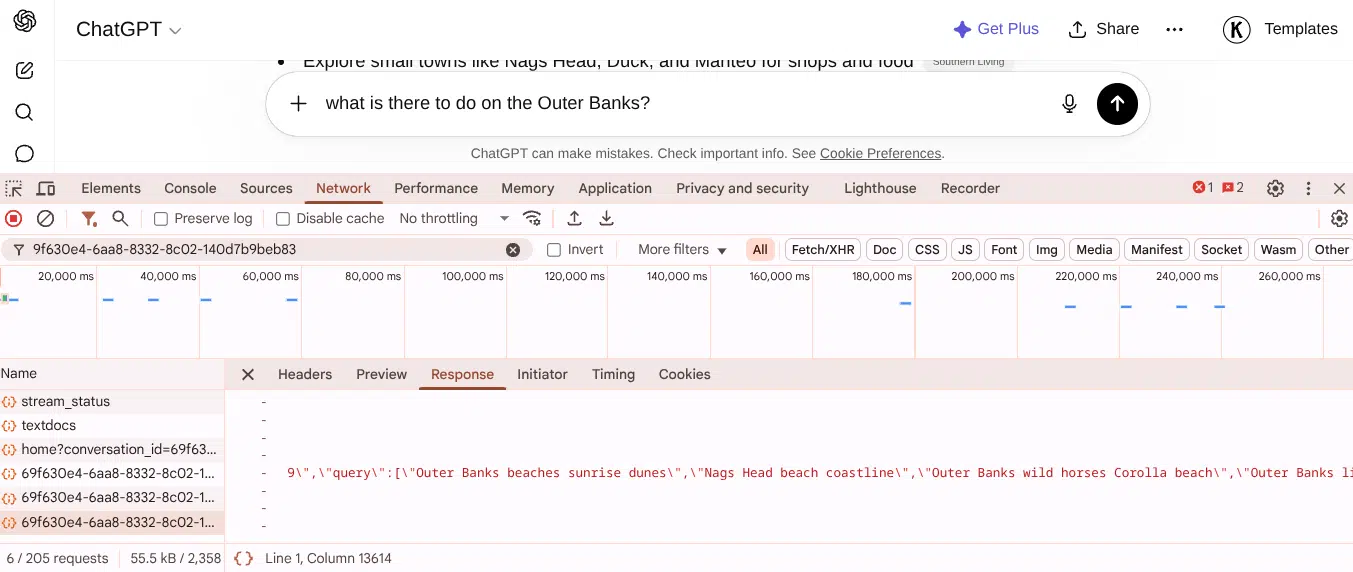

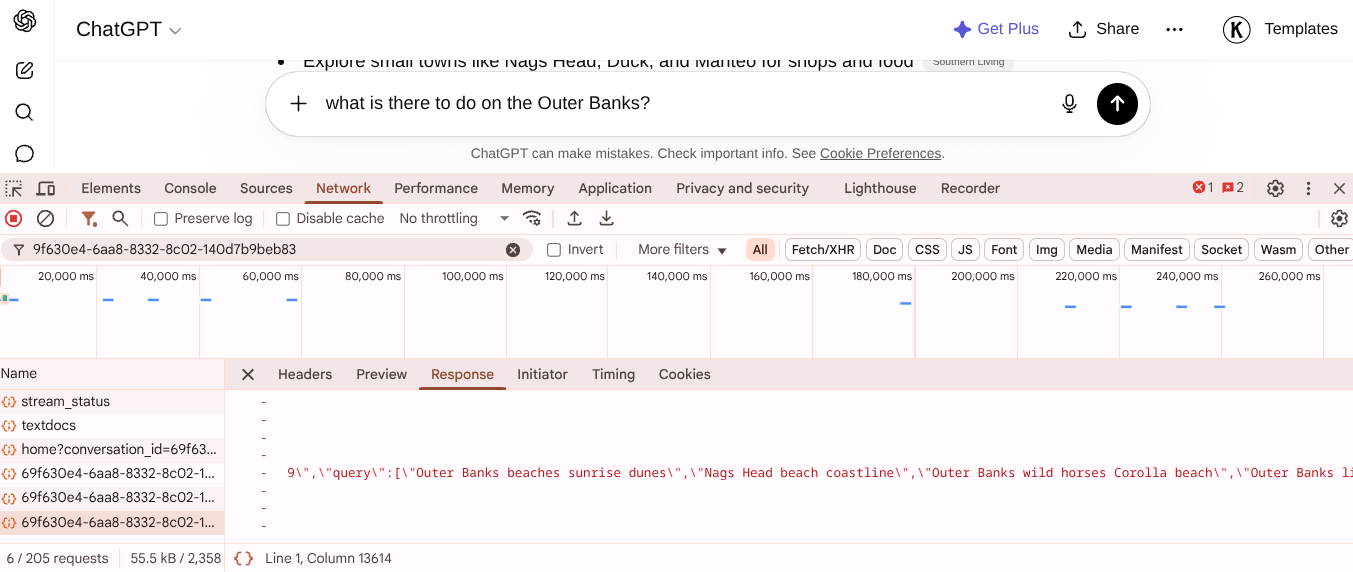

This isn't a futuristic prediction; it is already happening. When you use a tool like ChatGPT, the system isn't just returning a list of links. It is making decisions, performing supplemental searches, and synthesizing conclusions. The agent is planning the journey on your behalf. The output you see is entirely dependent on what the agent retrieves and how it interprets that data. If you open your browser's DevTools during these sessions, you can actually see the "fan-out" queries the agent is running to fill in the gaps.

To understand the scale of this, it helps to look at the evolution of discovery in stages:

- Discovery v1: Direct experience. People found things through firsthand interaction and word of mouth.
- Discovery v2: Recorded knowledge. Libraries, books, and newspapers became the primary discovery points.
- Discovery v3: The scalable web. The explosion of digital information led to the rise of directories and, eventually, search engines.
- Discovery v4 (current): The blended era. LLMs have been integrated into search, providing a hybrid experience where an assistant does the legwork, though the human is still very much in the loop.
- Discovery v5: The agentic era. This is where the agent doesn't just find information but interacts with the world to complete tasks.

The trust ratchet only turns one way

The transition to this agentic era depends entirely on trust. Think about your own behavior with AI Overviews. Do you trust the answers more now than you did the day they launched? Probably. While you might not trust them 100% of the time, there is a growing list of queries where you are happy to accept the AI's answer and end your search right there.

This is the "trust ratchet." For low-risk, simple queries, like a dinner recipe or the benefits of a specific vitamin, the barrier to trust is low. As these systems improve, that boundary moves. We start trusting them with slightly higher-stakes information. While most of us wouldn't trust an AI to handle complex tax law or critical health diagnoses yet, the line continues to shift outward.

As trust increases, we stop asking agents to just tell us things and start asking them to do things for us. The risk of an agent incorrectly reordering a few grocery items is low, but the convenience is high. The value of an agent that can monitor flight and hotel combinations in real time to find a refundable deal within a specific budget is immense. Even the idea of an autonomous vehicle taking a family to a destination while they sleep or play games is a compelling value proposition.

Many people believe they will never hand over their autonomy to an agent. However, history suggests otherwise. People said the same thing about entering credit card numbers on websites, using GPS instead of maps, and relying on smartphones. The path usually moves from skepticism to reluctant acceptance, and finally to total integration.

What does this have to do with WebMCP?

This is where the conversation moves from theory to technical implementation. To enable "Discovery v5," agents need a way to interact with the web that is more reliable than simply scraping HTML. While MCP (Model Context Protocol) servers and skills files exist, they often have high barriers to entry and work only in limited contexts.

WebMCP is different because it is designed as a browser-native web standard. It is currently a W3C Community Group Draft and has already appeared in early previews, such as Chrome 146 beta. The goal of WebMCP is to provide websites with a structured, standardized way to expose actions directly to AI agents. This removes the need for the agent to "guess" how a site works or rely on brittle automation that breaks every time a CSS class changes.

Crucially, this isn't a proprietary project by a single company. The specification is being co-authored by engineers from both Google and Microsoft. When the two dominant forces in browser and OS ecosystems agree on a standard for how AI agents should interact with the web, it is a signal that the industry is moving in this direction.
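The brittle-automation problem described above is easy to reproduce. In this minimal Python sketch, a scraper finds a booking field by a hypothetical CSS class (bk-date). After a redesign renames the class, the lookup silently returns None, while a structured declaration of the same action keeps describing the same parameters. The DECLARED_TOOL dictionary is a hypothetical stand-in for illustration, not the WebMCP tool format.

```python
from html.parser import HTMLParser


class InputCollector(HTMLParser):
    """Collects the attributes of every <input> element on a page."""

    def __init__(self):
        super().__init__()
        self.inputs = []

    def handle_starttag(self, tag, attrs):
        if tag == "input":
            self.inputs.append(dict(attrs))


def scrape_field(html, css_class):
    """Brittle automation: locate a field by a styling class (hypothetical name)."""
    collector = InputCollector()
    collector.feed(html)
    for attrs in collector.inputs:
        if css_class in (attrs.get("class") or "").split():
            return attrs.get("name")
    return None  # the selector silently stops matching after a redesign


# Hypothetical structured declaration of the same action. It describes what the
# form does, not how it is styled; this is not the WebMCP wire format.
DECLARED_TOOL = {"name": "book_consultation", "parameters": ["date", "email"]}

before_redesign = '<input class="bk-date" name="date"><input class="bk-email" name="email">'
after_redesign = '<input class="date-picker-v2" name="date"><input class="email-v2" name="email">'

print(scrape_field(before_redesign, "bk-date"))  # date
print(scrape_field(after_redesign, "bk-date"))   # None
print(DECLARED_TOOL["parameters"])               # ['date', 'email'], unaffected by the redesign
```

The scraper's only knowledge of the form is a styling detail, so any visual refresh can break it without an error; a declaration ties the agent to the action's meaning instead.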

Declarative vs. imperative: You already know this distinction

WebMCP introduces two APIs, but for most site owners and technical SEOs, the Declarative API is the one that requires immediate attention. If you've worked with structured data or HTML, the concept will feel familiar.

The Declarative API allows you to annotate your existing HTML forms with specific attributes. These attributes describe what the form does and what the individual fields represent. Once these annotations are in place, the browser automatically translates them into a structured tool that any compatible AI agent can call.

The beauty of this approach is that it doesn't break the user experience. The form continues to function exactly as it always has for human visitors. There is no need to build a separate "AI version" of your site. Instead, you are simply adding a layer of machine readable metadata that tells the agent, "This is a booking form, and this field is for the date." While the exact attribute names are still being finalized in the specification process, the core logic is set.
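To make the translation step concrete, here is a minimal Python sketch of how an annotated form could be turned into a tool descriptor. It assumes the attribute names used later in this article (toolname, tooldescription, toolparamdescription), which may change as the specification settles, and it assumes the standard HTML required attribute marks a required parameter. FormToolExtractor, form_to_tool, and the descriptor's shape are hypothetical; in WebMCP the browser performs this translation itself, so a site owner only writes the markup.

```python
from html.parser import HTMLParser

FIELD_TAGS = ("input", "select", "textarea")


class FormToolExtractor(HTMLParser):
    """Turns the first annotated <form> into a tool descriptor (hypothetical shape)."""

    def __init__(self):
        super().__init__()
        self.tool = None
        self._in_tool_form = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "form" and "toolname" in attrs and self.tool is None:
            self._in_tool_form = True
            self.tool = {
                "name": attrs["toolname"],
                "description": attrs.get("tooldescription") or "",
                "parameters": {},
            }
        elif tag in FIELD_TAGS and self._in_tool_form:
            name = attrs.get("name")
            if not name:
                return  # unnamed fields (e.g. buttons) cannot carry arguments
            self.tool["parameters"][name] = {
                "description": attrs.get("toolparamdescription") or "",
                # Assumption: the standard HTML `required` attribute marks required params.
                "required": "required" in attrs,
            }

    def handle_endtag(self, tag):
        if tag == "form":
            self._in_tool_form = False


def form_to_tool(html):
    """Return the descriptor for the first annotated form, or None if there is none."""
    parser = FormToolExtractor()
    parser.feed(html)
    parser.close()
    return parser.tool


BOOKING_FORM = """
<form action="/book" method="post" toolname="book_consultation"
      tooldescription="Book a 30-minute technical SEO consultation call">
  <input name="date" type="date" required
         toolparamdescription="Requested call date, YYYY-MM-DD">
  <input name="email" type="email" required
         toolparamdescription="Email address for the calendar invite">
  <textarea name="notes" toolparamdescription="Optional context about the site"></textarea>
  <button type="submit">Book a call</button>
</form>
"""

tool = form_to_tool(BOOKING_FORM)
print(tool["name"])                # book_consultation
print(sorted(tool["parameters"]))  # ['date', 'email', 'notes']
```

In this sketch the submit button is skipped because it has no name, so only fields that carry data become parameters; the form itself is untouched and still submits normally.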

What the agent sees: Before and after

To understand the practical impact, consider a standard booking or contact form used by almost every service-based business. In a traditional setup, an AI agent has to scrape the page, try to identify the input fields, and guess the purpose of the form based on labels and placeholders. This is prone to error.

With WebMCP declarative annotations, the process changes. By adding attributes like toolname and tooldescription to the form, and toolparamdescription to the input fields, you are providing a map. The agent no longer has to guess; it is explicitly told what the tool is and what data is required to execute the action.

You aren't rebuilding your interface for machines. You are making your existing interface legible to them. It is the difference between asking a stranger to guess how to use a complex machine and providing them with a clear, printed manual.
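Once an action is declared, the agent can check its own work before submitting instead of guessing from labels and placeholders. The following sketch shows one such agent-side check: it compares proposed arguments with a declared tool and reports missing required parameters and unknown ones. The descriptor shape and validate_call are hypothetical and not part of the WebMCP specification.

```python
def validate_call(tool, args):
    """Check an agent's proposed arguments against a declared tool.

    `tool` uses a hypothetical shape:
    {"name": str, "parameters": {param: {"description": str, "required": bool}}}
    Returns a list of problems; an empty list means the call looks complete.
    """
    params = tool["parameters"]
    problems = []
    for name, spec in params.items():
        # A required parameter that is absent or empty blocks the action.
        if spec["required"] and not args.get(name):
            problems.append(f"missing required parameter '{name}': {spec['description']}")
    for name in args:
        # Arguments the site never declared would be silently dropped or rejected.
        if name not in params:
            problems.append(f"unknown parameter '{name}'")
    return problems


BOOKING_TOOL = {
    "name": "book_consultation",
    "description": "Book a 30-minute technical SEO consultation call",
    "parameters": {
        "date": {"description": "Requested call date, YYYY-MM-DD", "required": True},
        "email": {"description": "Email address for the calendar invite", "required": True},
        "notes": {"description": "Optional context about the site", "required": False},
    },
}

print(validate_call(BOOKING_TOOL, {"date": "2026-03-10"}))
# ["missing required parameter 'email': Email address for the calendar invite"]
print(validate_call(BOOKING_TOOL, {"date": "2026-03-10", "email": "a@example.com"}))
# []
```

Because each parameter carries its own description, the problem report can tell the agent exactly what to ask the user for next, which is the "printed manual" effect in practice.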

Why this matters for your sites specifically

When we enter the world of agentic discovery, the way users "arrive" at your business changes. Consider the types of requests a user might give an agent in the near future:

- "Find me a technical SEO consultant who avoids LinkedIn-style clichés and has an opening for a call next Tuesday."
- "Compare three different AI observability tools and tell me which one actually solves my specific problem rather than just offering a chatbot."
- "Find a local contra dance this Friday, verify whether beginners are welcome, and add it to my calendar if the band looks good."

In these scenarios, the agent is the gatekeeper. Which site gets the engagement? It will be the one the agent can interact with most confidently and with the least amount of friction. If your competitor's site explicitly declares its capabilities and booking process via WebMCP, and yours requires the agent to scrape and guess, the agent will naturally gravitate toward the path of least resistance.

The window is open, but not forever

Early adoption deserves skepticism by default, but some shifts are different in kind rather than degree. Schema markup, HTTPS, and mobile-first indexing each created a window where early movers gained an advantage because they understood the underlying change, not just the tactic.

WebMCP is currently in the draft stage, but the momentum is significant. It is already in Chrome beta and has been integrated into Cloudflare's infrastructure. This suggests that the plumbing is being laid before the general public even realizes the shift is happening.

The goal isn't to rush in blindly, but to prepare your technical foundation. By understanding how to make your site's actions declarative, you ensure that when the "trust ratchet" finally clicks into place for the majority of users, your business is already legible to the agents they are using to navigate the world.

Practical next steps

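The useful takeaway is not only the idea itself, but the operating habit behind it. Use it as a checklist for decisions: what deserves attention now (for example, identifying the forms that represent your site's key actions, such as bookings and contact requests), what should be monitored (the WebMCP draft and its browser support), what needs a stronger evidence base, and what can wait until agent-driven traffic reaches meaningful scale.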
